Shelly Palmer (aka Mr. CES) is wrong. Yesterday, he declared in his daily missive that his vote for 2017 “best in show” was the Mercedes-Benz Vision Van. My retort: “Shelly, go outside the North Hall to the Gold Parking Lot and take a ride on NVIDIA’s self-driving car.”

I do agree with Shelly’s wisdom that the Mercedes concept van “sums up all the technologies that are on display at CES this year in one neat package. It’s autonomous, it delivers on-demand and it uses machine learning to accomplish its tasks.” However, the operative word from a robotics standpoint is “concept.” As realists, we are more excited by kick-the-tires demos like the NVIDIA one below.

Standing on the windy parking lot track, I personally witnessed (and captured) three adventurous technology executives hand over their lives to NVIDIA’s AI chip. To me, this demo sums up the CES 2017 experience best – humans are ready to give the keys to the machines.

I am not alone. Gary Shapiro, the CEO of the Consumer Technology Association that hosts CES, exclaimed that “NVIDIA plays a central role in some of the most important technology forces changing our world today. Its work in artificial intelligence, self-driving cars, VR and gaming puts the company on the leading edge of the industry.”

NVIDIA, a graphics chip company that is probably best known for gaming, has leveraged its knowledge base to develop new computer vision and deep learning microprocessors, growing its market share beyond gaming into AI and connected cars. The company has been rewarded handsomely on Wall Street, with its stock value booming 230% this past year. NVIDIA is just one of many companies that have driven the growth of the Autonomous Vehicle Marketplace at CES by over 75% since its inception in 2014.

Yesterday, NVIDIA’s CEO, Jen-Hsun Huang, spent a considerable amount of time in his CES keynote address proclaiming the benefits of using neural networks to teach computers to drive: “Deep learning has made it possible to crack that nut. We can now perceive our environment surrounding our car; we can predict using artificial intelligence where other people and cars will be.”

Huang, in partnership with Audi, unveiled NVIDIA’s new driving computer, Xavier, which runs its own Driveworks operating system. The processing unit contains eight ARM64 cores and boasts performance of 30 trillion operations per second.

According to Huang, “these AI connected cars should be able to drive from address to address in nearly every part of the world.” Recognizing the shortcomings of present learning systems, Huang declared that Driveworks can also recognize when it has low confidence in its self-driving ability and hand off driving to the human co-pilot.

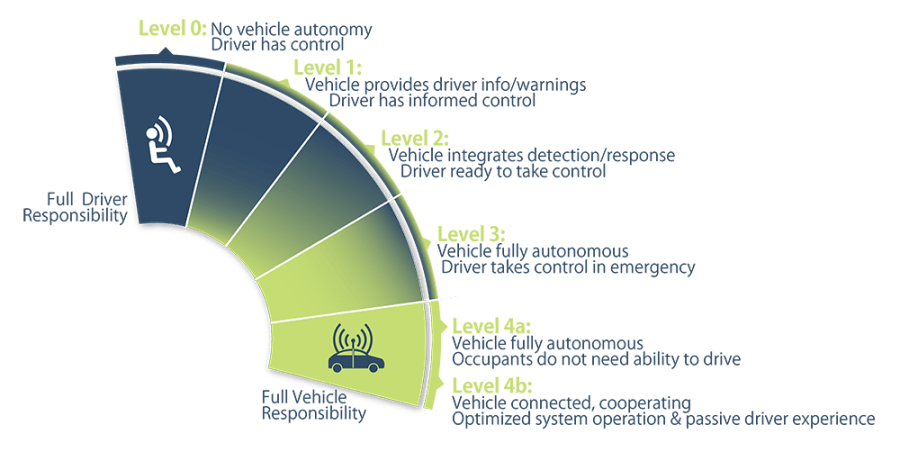

A day earlier, Dr. Gill Pratt of Toyota Research Institute (TRI) stated that the most dangerous aspect of self-driving systems may be the computer-to-human handoff in level 2 autonomy: “Considerable research shows that the longer a driver is disengaged from the task of driving, the longer it takes to re-orient. When someone over-trusts a level 2 system’s capabilities they may mentally disconnect their attention from the driving environment and wrongly assume the level 2 system is more capable than it is. We at TRI worry that over-trust may accumulate over many miles of handoff-free driving.”

According to Pratt, “TRI has been taking a two-track approach, simultaneously developing a system we call Guardian, designed to make human driving safer…while working on L4 and 5 systems that we call Chauffeur. The perception and planning software in Guardian and Chauffeur are basically the same. The difference is that Guardian only engages when needed, while Chauffeur is engaged all of the time during an autonomous drive. In Guardian, the driver is meant to be in control of the car at all times except in those cases where Guardian anticipates or identifies a pending incident and briefly employs a corrective response.” In a sense, Toyota is skipping NVIDIA’s and Tesla’s auto-pilot controls and going from Level 1 to full autonomy. But when will this be available?

Huang definitively stated yesterday, “we’ll have cars on the road by 2020.” This date has been echoed by many other leading voices in the auto and software industries. Last summer, Ford Motor Co. Chief Executive Mark Fields said they “plan to offer a fully automated driverless vehicle for commercial ride-sharing in 2021.” Yesterday, I saw firsthand the autonomous Ford Fusion concept car (notice the attractive dual LIDAR installations above the mirrors). Ford said it expects to deploy 30 of these self-driving Fusion Hybrid prototypes in 2017 and 90 the following year, but admitted that “there’s still a lot of engineering development between now and 2021.”

Pratt is less optimistic than most in the industry: “All car makers are aiming to achieve level 5, where a car can drive fully autonomously under any traffic or weather condition in any place and at any time. I need to make this perfectly clear: This is a wonderful goal. However, none of us in the automobile…or IT industries are close to achieving true level 5 autonomy. It will take many years of machine learning and many more miles than anyone has logged of both simulated …and real-world testing to achieve the perfection required for Level 5 autonomy.”

Walking around the Convention Center and the Sands Eureka Park Expo, I noticed some other trends that stood out as important flags for the coming year:

- Virtual Reality is last year’s drone fad: It appeared that every space formerly occupied by hobby drone companies has been filled this year by Oculus or Samsung Gear knockoffs (even The Verge got into the act by handing out pink cardboard VR headsets at JFK for flights bound for Vegas).

- EVE Robot is the new Alexa: As predicted, the age of social robots is here; in fact, it started with Siri. However, what I didn’t anticipate is that all of the industrial designers would watch the same Pixar film (see LG examples above). While physically adorable, the value proposition has yet to be fully fleshed out, as most offerings are just better packaging of Alexa.

- Worlds are colliding, and crowds are growing: Speaking of Alexa, she’s everywhere, even in the latest Ford models this year. Does this mean she is like the NSA, listening to our every conversation? In any case, CES this year was a monster, bigger than anyone expected. Even Mr. CES admitted yesterday that he couldn’t get near some booths. [By the way, if you would like to meet Shelly Palmer, please come to my dinner with him this March]

To all my fellow CES attendees, see you next year… to all those who stayed home, now do you have FoMO?

How will the manufacturers of self-driving vehicles prevent the various sensors from being jammed or given false information that will affect their performance and potentially put the passengers at risk?

Devices that sense the outside world are not immune from disruption … please recall that hackers are able to take over a car’s computerized systems and control the vehicle.

One can envision a person carrying a small device that, when activated, feeds false signals to a car’s sensors.

Hmmm … reminds one of those kits one could buy and assemble to disrupt (analog) T.V. reception …

Dr. Dan – I refer you to my previous post about cybersecurity and transportation: https://robotrabbi.com/2016/12/02/hacked/

How was Shabbat in Vegas?

Remember, bring nothing back except some souvenirs for the twins.

Well done – that article – R.R.! WHO (what entity) will be held liable for damages caused when one of their systems gets hacked and someone or something is damaged? Has the insurance industry responded to this situation?

This is a good question, and worthy of a deeper response in a future post. The short answer is insurance companies tend to treat these events like natural disasters, unless there is a separate rider for coverage. Obviously, as more machines become autonomous liability issues will need to evolve.

How does one justify classifying people-made problems as natural disasters? Perhaps, as we are considered products of nature, disasters caused by our minds/hands would, therefore, be considered natural disasters.

Perhaps the liability could be considered under tort law: “A wrong that is committed by someone who is legally obligated to provide a certain amount of carefulness in behavior to another and that causes injury to that person, who may seek compensation in a civil suit for damages.”

One can predict that insurance premiums will increase for every driver to accommodate the payouts caused by the failure of and the hacking into self-driving vehicles and the related automotive technology software. Perhaps insurers should have specific riders targeting the users of those technologies. And, importantly, will there be enough trained and skilled techs. available to repair and keep the electronic systems working? And, who will be liable when there errors cause an accident?

Are we well into PILPUL?

Correction to: And, who will be liable when there errors cause an accident? Their … not there … actions …

Dr. Dan see this week’s post, inspired by our conversation above: https://robotrabbi.com/2017/01/13/alexa/

Wow! Well done – that article!

I want to be autonomous too – only in the way the Merriam-Webster dictionary defines the word: “… existing or acting separately from other things or people.”

One can recall that U.S. citizens became somewhat outraged when news of the NSA’s monitoring of all electronic communications was released.

And one could (should) be curious (concerned) about how the incoming administration and Congress will deal with the weighty issues of a citizen’s privacy versus business interests.

Will there be a revised version of the Miranda warning? Such as:

“You have the right to remain silent. Anything you say to Alexa (et al.) or me can and will be used against you in a court of law. You have the right to an attorney. If you cannot afford an attorney, one will be provided for you or you can choose Alexa. Do you understand the rights I have just read to you? With these rights in mind, do you wish to speak to me … or to Alexa?”

Of course, with the ongoing development of brain-wave monitoring and interpretation, that warning, in the future, will have to include the statement: ‘Anything you say to me or Alexa, or think, will be used against you …’

Say — wasn’t there a movie … ?

Speaking of Google – I summoned this idiom and its definition: ‘Be careful what you wish for’: If you get things that you desire, there may be unforeseen and unpleasant consequences.

P.S. I enjoy summoning Google from across the room, verbally, at random times, into my spouse’s cell-phone.

Nice blog, thank you